People Are External Processors. The AI Age Should Build Software Around That.

For decades, software has been designed around a flawed assumption: that people think internally and then use computers to record the finished result.

But anyone who has spent a serious day at a desk knows that is not how modern work actually happens.

We do not think first and compute second. We think through the computer.

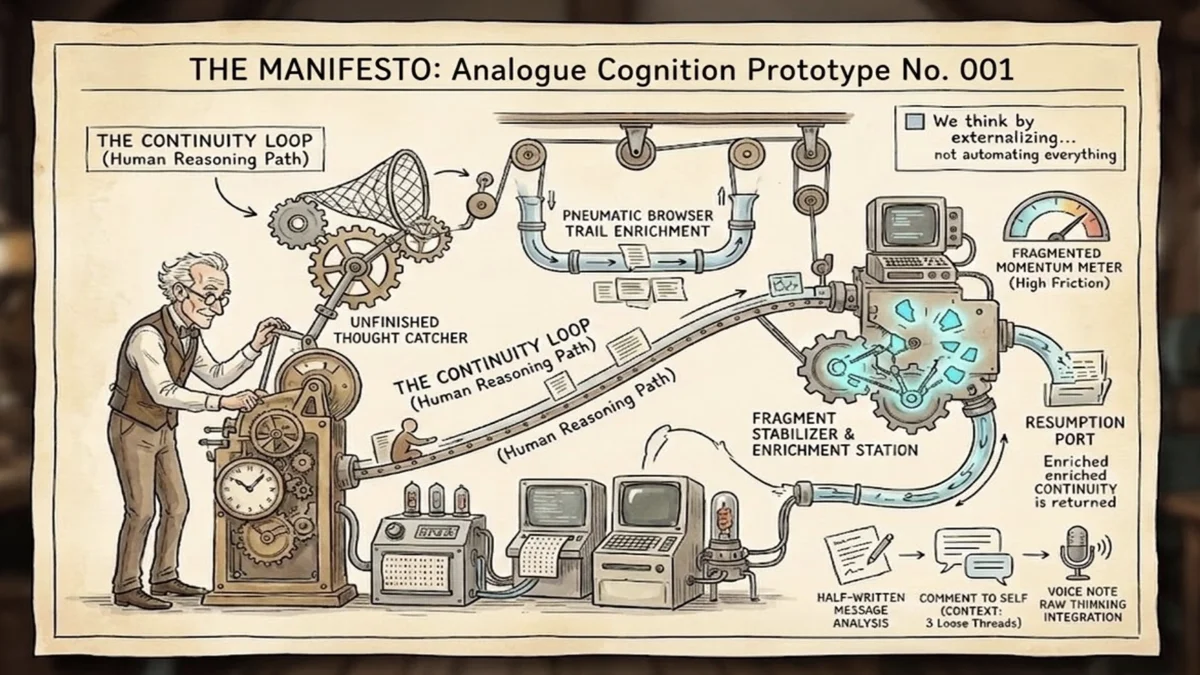

We think in open tabs. We think in half-written messages. We think in version history, scratch files, comments to ourselves, text snippets, and browser trails. We think by saying something out loud, seeing it in front of us, reacting to it, and refining it. We think by externalizing.

Computers are internal processors. People are external processors.

That distinction matters more now than ever, because the first wave of AI products has largely inherited the wrong model of cognition. Most of them assume that the user arrives with a clean prompt, a discrete task, and a coherent request. The machine waits behind a text box, ready to respond once the human has translated messy reality into polished input.

That is useful for some things. It is not enough for the actual texture of work.

The truth is that much of high-value knowledge work begins before the prompt is ready. It starts as a passing thought while coding, a realization in the middle of a meeting, a sentence spoken while walking back to the desk, or a vague sense that three loose threads probably belong together. By the time many people can articulate what they need, they have already lost some of the signal. They have edited the thought into something cleaner but less alive.

This is where the next generation of AI software should begin.

Not with the final question. With the unfinished thought.

The real opportunity in AI is not just better answers. It is better continuity.

The people who feel this most acutely are not a niche in the traditional sense. They are developers, designers, founders, managers, consultants, writers, researchers, and operators. In other words: people whose jobs increasingly happen at the boundary between cognition and computation. They are not merely using software to execute a plan. They are using software as an extension of memory, attention, sequencing, and reflection.

That is why so many workers have already built accidental external brains for themselves. One app holds tasks. Another holds notes. Another holds chat transcripts. The browser holds research. The code editor holds intent. The calendar holds obligations. Voice notes hold raw thinking. None of these systems were designed as a coherent cognitive loop, but together they function as one.

The result is familiar: fragmented momentum.

You know you have thought about this before. You know the important insight is somewhere. You know the context exists. But recovering it takes just enough effort that the day lurches forward without it. Modern work is full of small continuity failures, and the cost of those failures compounds. You do not just lose notes. You lose state.

The software industry has spent years trying to solve this with organization. Better dashboards. Better knowledge bases. Better note-taking systems. Better search. Better task structures. Those matter, but they still often begin too late in the process. They help after thought has already hardened into an artifact.

AI creates a chance to intervene earlier.

If intelligence is ambient, local, and cheap enough to run continuously, then software no longer has to wait for the formal request. It can help catch what is fragile, enrich it in the background, and return it later when it becomes useful. It can preserve momentum instead of merely storing outputs.

That shift sounds subtle. It is not.

Designing software for external processors means building around a very different premise. The product should assume that thought emerges in fragments. It should assume that context is part of cognition. It should assume that users need help resuming, not just producing. And it should assume that organizing everything manually is itself a form of friction.

This does not mean replacing human thought with machine thought. In fact, it means respecting human thought more honestly.

Humans are not deficient because they use drafts, conversations, and artifacts to think. That is what sophisticated cognition looks like in the real world. We reason by projecting thought outward and then reshaping it. The desk, the notebook, the whiteboard, and now the computer are all parts of that loop. AI should be designed to support this external processing, not pretend it can collapse it into a single conversational window.

That has implications far beyond one app category.

It changes how we should think about note-taking, task management, meeting prep, creative tooling, developer environments, and workplace operating systems. The best AI products of the next few years may not be the ones that behave most like brilliant interns. They may be the ones that are best at preserving continuity across unfinished work.

That also suggests a more grounded product philosophy for the AI era.

The goal is not to impress the user with intelligence. The goal is to reduce the cost of staying in motion.

Good software for external processors should let people drop a fragment without breaking flow. It should make that fragment more useful before the person comes back to it. And when the user returns, the system should help them resume with more context, not more chaos.

This is a different ambition than "automate everything." It is closer to "hold the thread."

That may prove to be the more durable category.

Because as AI improves, one thing is becoming increasingly obvious: the bottleneck at the desk is not only generating answers. It is preserving coherence across the hundreds of small transitions that define a day of real work.

People are external processors.

The winners in AI software will build for that reality.